Building Runtime in 3 weeks with $100 of GPT-4

Before this project, I'd never touched NextJS, Vercel, TypeScript, or Supabase. I'd played with React a little, but I'd never deployed a modern frontend or an edge function.

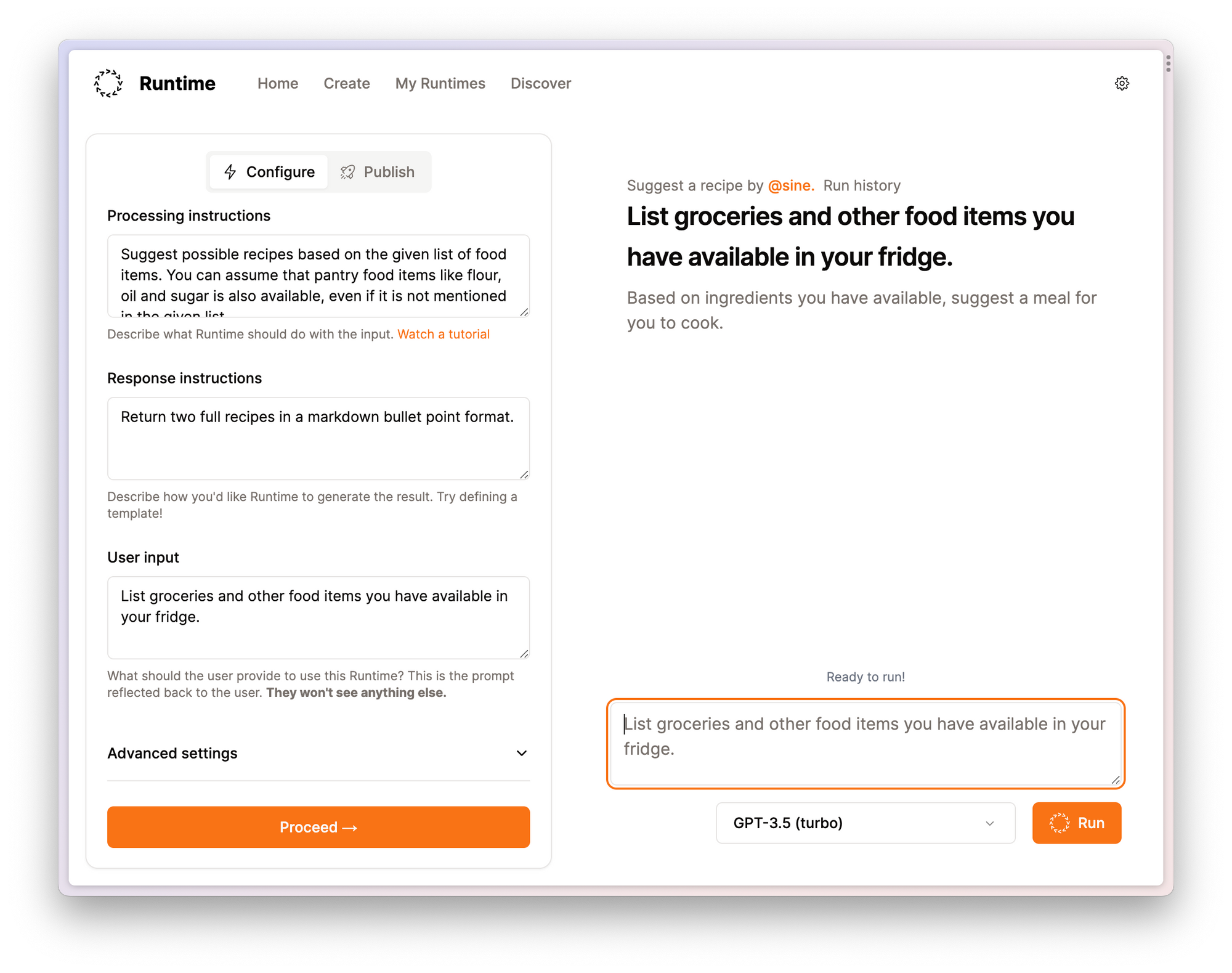

With the help of GPT-4 and a bit of patience, I built Runtime, a small web-app that makes it easy to template prompts that you'd normally be copy-pasting into ChatGPT. (Demo video)
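At its core, a prompt template is a string with placeholders that get filled in on each run. Here's a minimal sketch of that idea; the `{{variable}}` placeholder syntax and the `fillTemplate` helper are assumptions for illustration, not necessarily how Runtime does it.

```typescript
// Hypothetical helper: replaces {{name}} placeholders in a prompt template
// with the values supplied for this run. A placeholder with no value throws,
// so a typo in a variable name fails loudly instead of reaching the model.
function fillTemplate(template: string, vars: Record<string, string>): string {
  return template.replace(/\{\{\s*(\w+)\s*\}\}/g, (_match: string, name: string) => {
    const value = Object.prototype.hasOwnProperty.call(vars, name) ? vars[name] : undefined;
    if (value === undefined) {
      throw new Error(`Missing value for template variable "${name}"`);
    }
    return value;
  });
}

const template = "Summarize the following {{format}} in {{count}} bullet points:\n\n{{text}}";
const prompt = fillTemplate(template, {
  format: "meeting notes",
  count: "3",
  text: "We agreed to ship on Friday.",
});
console.log(prompt);
```

The template is written once; only the variables change between runs, which is the copy-pasting Runtime aims to remove.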

Getting started

My goal was to build something from scratch. I had some rules:

- No design up-front. Design it in the browser.

- Something to do with the OpenAI API. Scratch my own itch.

- Finish it by the end of September (3 weeks).

A great tutor leads to a mindset shift

I started by simply asking ChatGPT how to accomplish things, which was a great way to begin.

It's a great teacher. I'd describe what I wanted to accomplish, and learn to use the tools it suggested as I needed them. I've never been this good at studying: I want to learn by doing, and this let me do just that.

If I had questions about how something worked, I'd get answers in the context of my specific application and the code I'd written. No foo and bar here. I could ask deeper and deeper questions. It's an excellent tutor.

> I'm really impressed with GPT-4 for helping me debug things.
>
> I spent hours fiddling with this yesterday, and finally managed to get to the bottom of it with GPT-4 explaining exactly how to debug it.
>
> Best of all: I can get a summary so I can understand the fix!
>
> @Lloyd, September 23, 2023

I went from feeling like I'd need to spend a lot of time learning each of these technologies inside out before I could be productive, to feeling like I could build anything I set my mind to.

As my codebase grew, I added more pages and started breaking things down into React components across files, and ChatGPT stopped working well. Explaining what I wanted took a lot of copy-pasting, which was funny considering the purpose of my project was to eliminate exactly that.

Cursor IDE

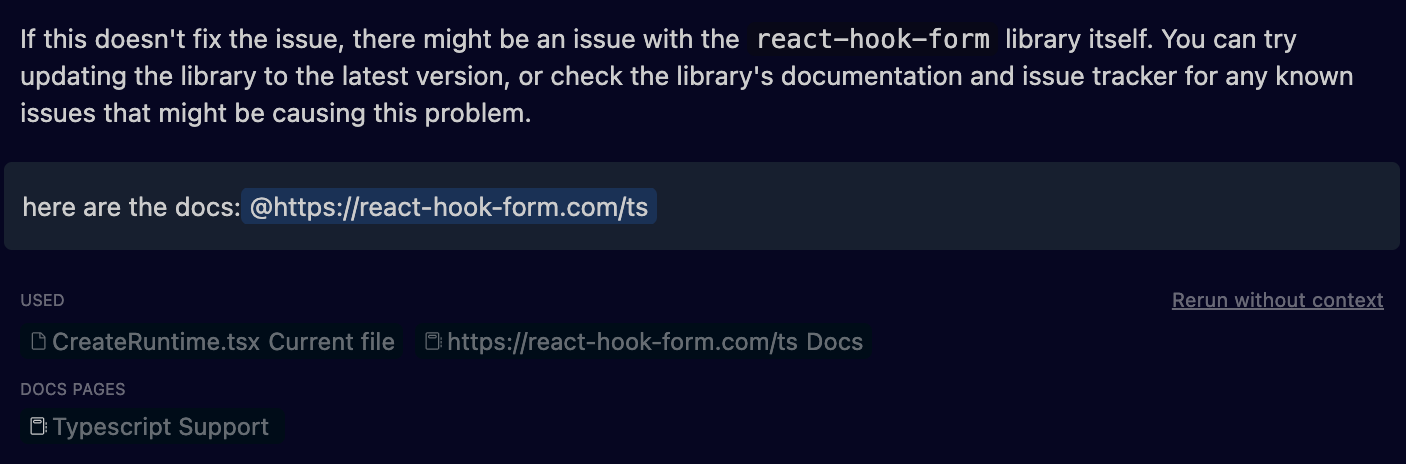

I'd read about Cursor on Twitter, so I decided to give it a go. It's essentially VS Code with the ability to query GPT-4 directly from within the editor.

Things that are great:

- You don't have to carefully prompt it; it knows it's an IDE.

- It uses your current file as context when you ask questions.

- Your whole codebase can also be used as context.

- You can add specific lines of code to the prompt with a keyboard shortcut.

- You can reference specific lines of code by their line numbers (LOC), which is useful when writing more specific questions.

- You can @reference different files.

- You can @reference documentation, and bring your own (BYO) documentation by adding it; Cursor will index it.

Things that are less great:

- It's difficult to estimate what a given query will "cost".

- I get the feeling whole-codebase queries are expensive, so I avoid them.

- GPT-3.5 is available, but I had a much worse experience with it and rarely bothered with it except for refactors.

- @referencing single files is nice and makes forming questions simple. While it's generally a good idea to keep your components, files, and pages small and focused, I occasionally felt I was prematurely optimizing (and perhaps complicating) my codebase just to make it easier to ask questions.

- It has an inline code writer inside the editor. I didn't use it much, as I found it a bit clunky; I preferred the chat.
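To see why whole-codebase queries feel expensive, it helps to remember that API pricing is per token, and more context means more tokens. Here's a rough sketch, assuming OpenAI's rule of thumb of about four characters per token for English text. The prices are GPT-4 (8K context) list prices at the time of this project ($0.03 per 1K prompt tokens, $0.06 per 1K completion tokens) and may have changed; the helper names and the 500-token answer are hypothetical.

```typescript
// Rough heuristic (assumption): about 4 characters of English text per token.
const CHARS_PER_TOKEN = 4;

function estimateTokens(text: string): number {
  return Math.ceil(text.length / CHARS_PER_TOKEN);
}

interface Pricing {
  promptPer1K: number; // USD per 1,000 prompt (input) tokens
  completionPer1K: number; // USD per 1,000 completion (output) tokens
}

// GPT-4 (8K context) list prices around September 2023; check current pricing.
const GPT4_8K: Pricing = { promptPer1K: 0.03, completionPer1K: 0.06 };

function estimateCostUSD(promptTokens: number, completionTokens: number, pricing: Pricing): number {
  return (promptTokens / 1000) * pricing.promptPer1K + (completionTokens / 1000) * pricing.completionPer1K;
}

// Compare a prompt with one file of context against one stuffed with many files,
// assuming a 500-token answer in both cases.
const contexts: Array<[string, string]> = [
  ["one file", "x".repeat(8000)], // ~2,000 tokens
  ["many files", "x".repeat(28000)], // ~7,000 tokens, close to the 8K limit
];
for (const [label, context] of contexts) {
  const tokens = estimateTokens(context);
  console.log(`${label}: ~${tokens} prompt tokens, ~$${estimateCostUSD(tokens, 500, GPT4_8K).toFixed(2)}`);
}
```

Stuffing more files into the context multiplies the per-question cost, which adds up quickly over hundreds of questions.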

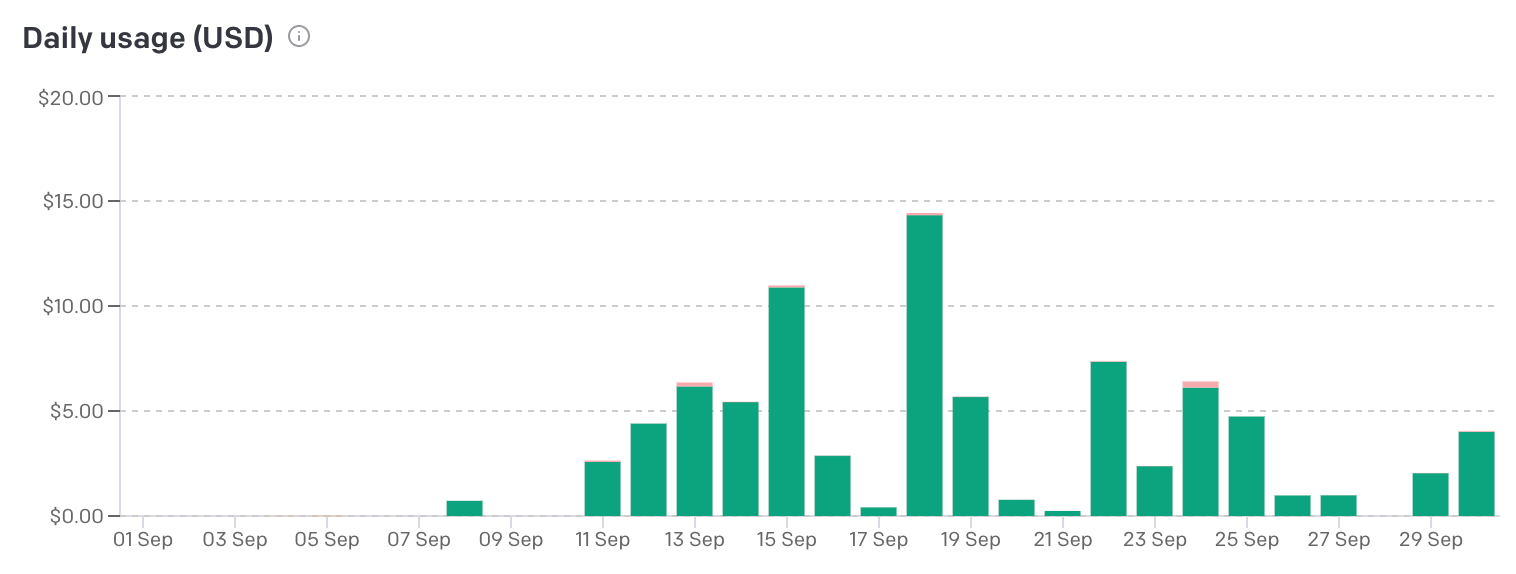

Overall, I'm really impressed with Cursor. I chose to bring my own API key rather than subscribing to Cursor, and I spent under $100 on the whole project.

TypeScript

I chose TypeScript because I felt it made it easier for GPT-4 and Cursor to actually help me. I figured I'd call this out given the recent discussions about it being a burden.

- Supabase (more on that in a minute) generates types for my database, which means I have the database schema in my codebase.

- I didn't want to accidentally introduce bugs I knew I could avoid with type-safety - and I think I learned more by being forced to think about types.

- Ultimately, I used Cursor's "AI Fix" button a lot to resolve type problems for me, so it didn't feel like a huge burden.
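The payoff of generated types is that code is checked against the real schema. The sketch below hand-writes a trimmed-down `Database` type in the general shape the Supabase CLI generates (`supabase gen types typescript`), for a hypothetical `runtimes` table. The table, its columns, and the `Row`/`Insert` helper aliases are illustrative assumptions, not Runtime's actual schema. In a real project you'd import the generated file instead and pass it to `createClient<Database>()` from `@supabase/supabase-js`.

```typescript
// Trimmed-down, hand-written stand-in for a Supabase-generated types file.
// The "runtimes" table and its columns are hypothetical.
type Json = string | number | boolean | null | { [key: string]: Json } | Json[];

type Database = {
  public: {
    Tables: {
      runtimes: {
        Row: { id: string; name: string; template: string; created_at: string; settings: Json | null };
        Insert: { id?: string; name: string; template: string; created_at?: string; settings?: Json | null };
        Update: { id?: string; name?: string; template?: string; created_at?: string; settings?: Json | null };
      };
    };
  };
};

// Hypothetical convenience aliases for the row and insert shapes of any table.
type TableName = keyof Database["public"]["Tables"];
type Row<T extends TableName> = Database["public"]["Tables"][T]["Row"];
type Insert<T extends TableName> = Database["public"]["Tables"][T]["Insert"];

// Misspelled columns or wrong value types are now compile-time errors.
function describeRuntime(runtime: Row<"runtimes">): string {
  return `${runtime.name} (created ${runtime.created_at.slice(0, 10)})`;
}

const draft: Insert<"runtimes"> = { name: "Summarizer", template: "Summarize: {{text}}" };
// const bad: Insert<"runtimes"> = { name: "x" }; // error: property 'template' is missing

console.log(
  describeRuntime({
    id: "1",
    name: draft.name,
    template: draft.template,
    created_at: "2023-09-20T10:00:00Z",
    settings: null,
  }),
);
```

Because `Insert` makes database-defaulted columns optional while `Row` makes them required, the compiler catches both missing fields on insert and unchecked assumptions on read.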

Supabase, Vercel

I didn't want to roll my own auth, and Supabase Auth was easy to get started with. Edge Functions are sweet: I used them to proxy my OpenAI calls and hide my API key from the client. They're all Deno/TypeScript, so there was one less thing to learn.
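The proxy pattern is small enough to sketch. This version assumes OpenAI's Chat Completions endpoint, a `{ prompt }` JSON body from the client, and the `gpt-4` model; `handleRequest` is a hypothetical name, not Runtime's actual function. It takes the API key and a `fetch` function as parameters so it can be tested without the network. CORS handling, which a browser-facing function also needs, is left out.

```typescript
type FetchFn = (input: string, init?: RequestInit) => Promise<Response>;

// Hypothetical proxy handler: the browser sends only the prompt. The API key
// stays on the server and is attached here, so it never ships to the client.
async function handleRequest(req: Request, apiKey: string, fetchFn: FetchFn = fetch): Promise<Response> {
  if (req.method !== "POST") {
    return new Response("Method not allowed", { status: 405 });
  }
  if (!apiKey) {
    return new Response("Server is missing OPENAI_API_KEY", { status: 500 });
  }

  let body: unknown;
  try {
    body = await req.json();
  } catch {
    return new Response("Body must be JSON", { status: 400 });
  }
  const prompt = typeof body === "object" && body !== null ? (body as { prompt?: unknown }).prompt : undefined;
  if (typeof prompt !== "string" || prompt.length === 0) {
    return new Response("Expected a non-empty 'prompt' string", { status: 400 });
  }

  const upstream = await fetchFn("https://api.openai.com/v1/chat/completions", {
    method: "POST",
    headers: { "Content-Type": "application/json", Authorization: `Bearer ${apiKey}` },
    body: JSON.stringify({ model: "gpt-4", messages: [{ role: "user", content: prompt }] }),
  });

  // Pass OpenAI's status code and JSON body straight back to the client.
  return new Response(await upstream.text(), {
    status: upstream.status,
    headers: { "Content-Type": "application/json" },
  });
}
```

In a Supabase Edge Function, the entry point would be something like `Deno.serve((req) => handleRequest(req, Deno.env.get("OPENAI_API_KEY") ?? ""))`, with the key stored as a project secret rather than in client code.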

Postgres and PostgREST are really easy to use. Ultimately, it means I don't need to roll my own backend; Supabase handles the important stuff for me. Maybe the Edge Functions setup stops working for me someday, but there are few hurdles to moving that logic to a "real" server and just connecting to Supabase like a normal database.
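PostgREST is what turns Postgres tables into that ready-made backend: each table becomes a REST endpoint, and filters, ordering, and limits go in the query string (`column=eq.value`, `order=column.desc`, `limit=n`). Here's a hypothetical helper that spells out those conventions; the project URL, `runtimes` table, and columns are placeholders. With `supabase-js` you'd normally write `supabase.from("runtimes").select("id, name").eq("user_id", userId).order("created_at", { ascending: false }).limit(10)` instead.

```typescript
// Hypothetical helper that builds a PostgREST read URL for a Supabase project.
// Supabase serves PostgREST under /rest/v1/<table>.
function buildSelectUrl(
  projectUrl: string,
  table: string,
  columns: string[],
  filters: Record<string, string>, // column -> value, compared with the "eq." operator
  options: { orderBy?: string; descending?: boolean; limit?: number } = {},
): URL {
  const url = new URL(`/rest/v1/${table}`, projectUrl);
  url.searchParams.set("select", columns.join(","));
  for (const [column, value] of Object.entries(filters)) {
    url.searchParams.set(column, `eq.${value}`);
  }
  if (options.orderBy) {
    url.searchParams.set("order", `${options.orderBy}.${options.descending ? "desc" : "asc"}`);
  }
  if (options.limit !== undefined) {
    url.searchParams.set("limit", String(options.limit));
  }
  return url;
}

const url = buildSelectUrl(
  "https://your-project.supabase.co",
  "runtimes",
  ["id", "name"],
  { user_id: "123" },
  { orderBy: "created_at", descending: true, limit: 10 },
);
console.log(url.toString());
```

Supabase expects such requests to carry an `apikey` header, plus an `Authorization: Bearer` token when acting as a signed-in user.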

Vercel seemed like the obvious choice given I was working with NextJS. It was really easy to get started with; it deploys automatically when I push to main. I have no complaints.

No design?

I don't regret my rule about no design up front, but I definitely felt like I focused a bit more on "make it work" vs "make it look great" in the absence of any up-front thinking about what I'd want it to look like.

Having no designs up front meant I spent a lot of time reworking my Create Runtime page. Until I'd built much of it, I didn't realize how important it was for users to be able to run a Runtime that didn't really "exist" yet, so they could iterate on and finalize a prompt template. That was doubly complicated because a Runtime isn't executed from the client; it's run in a Supabase edge function.
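One way to support running a Runtime that hasn't been saved yet is for the edge function to accept either a saved Runtime's id or the draft template itself. This is a hypothetical sketch rather than Runtime's actual design: the `RunRequest` shape, `resolveTemplate`, and the lookup function standing in for a database read are all assumptions.

```typescript
// Either run a saved Runtime by id, or run an unsaved draft straight from the editor.
// In both cases, `variables` would be filled into the template before calling the model.
type RunRequest =
  | { kind: "saved"; runtimeId: string; variables: Record<string, string> }
  | { kind: "draft"; template: string; variables: Record<string, string> };

// Hypothetical stand-in for a database read of a stored template.
type TemplateLookup = (runtimeId: string) => Promise<string | undefined>;

async function resolveTemplate(req: RunRequest, lookup: TemplateLookup): Promise<string> {
  if (req.kind === "draft") {
    // Nothing to fetch: the in-progress template travels with the request.
    return req.template;
  }
  // Here TypeScript has narrowed req to the "saved" variant.
  const template = await lookup(req.runtimeId);
  if (template === undefined) {
    throw new Error(`Runtime ${req.runtimeId} not found`);
  }
  return template;
}
```

Either way, the rest of the function (filling variables, calling the model) can stay identical, so the draft path doesn't need its own copy of the execution logic.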

I chose this rule because I wanted to focus entirely on writing code. Plus, it's interesting to see what you can chisel out of the rock.

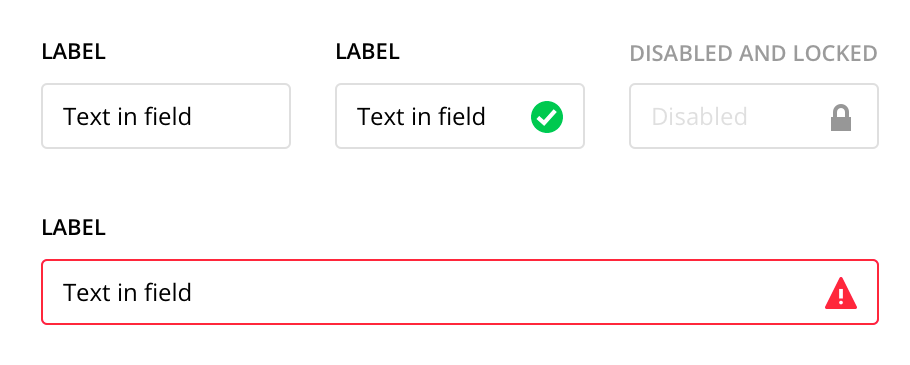

I chose shadcn/ui for my frontend components. I think that was a wise choice? It allowed me to move more quickly, and I didn't spend a bunch of time fiddling with UI components.

In future, I think I'd spend some more time sketching things out in Whimsical - not high fidelity, but just enough that I had a bit of a better picture of where I was going.

Some things that tripped me up

I used the NextJS App Router, not the Pages Router. This often caused problems, as GPT-4 would give me a bunch of suggestions aimed at Pages. Simple to fix, but something I had to keep an eye on.
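The two routers differ in file layout and function signatures, which is why Pages-style suggestions don't drop in. For an API endpoint, the Pages Router uses a default-exported `handler(req, res)` in `pages/api/`, while the App Router uses named HTTP-method exports in a `route.ts` file that take a Web `Request` and return a `Response`. Here's a minimal App Router sketch for a hypothetical `app/api/health/route.ts`:

```typescript
// app/api/health/route.ts (App Router): export one function per HTTP method.
// The Pages Router equivalent would live in pages/api/health.ts as
// `export default function handler(req: NextApiRequest, res: NextApiResponse)`.
export async function GET(request: Request): Promise<Response> {
  const { searchParams } = new URL(request.url);
  const name = searchParams.get("name") ?? "world";
  return new Response(JSON.stringify({ ok: true, greeting: `hello, ${name}` }), {
    headers: { "Content-Type": "application/json" },
  });
}
```

A suggestion that reaches for `res.status(200).json(...)` is a quick tell that it was written for the Pages Router.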

I'm excited to try out v0.dev because I think they're on the right track. Right now, I don't think GPT-4 is great at interpreting what I want to accomplish and helping me write CSS. When making changes to existing UIs, I found myself digging through the Tailwind/MDN docs more than asking GPT-4.

I didn't know much about React state management, context providers, etc. before starting. If I were to do it again, I'd read up on that first. I made some architectural mistakes early on that didn't break anything outright, so I struggled to ask GPT-4 to help me fix them.
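For form-heavy pages, a reducer is one common way to keep state updates in one place: you pass it to React's `useReducer` and, if several components need the state, share it through a context provider. The state shape and action names below are hypothetical, for a generic prompt-template editor rather than Runtime's actual code.

```typescript
// Hypothetical state for a prompt-template editor.
interface EditorState {
  template: string;
  variables: Record<string, string>;
  status: "idle" | "running" | "error";
}

type EditorAction =
  | { type: "setTemplate"; template: string }
  | { type: "setVariable"; name: string; value: string }
  | { type: "runStarted" }
  | { type: "runFailed" }
  | { type: "runFinished" };

// Pure function: returns a new state object instead of mutating the old one,
// which is what React's useReducer expects.
function editorReducer(state: EditorState, action: EditorAction): EditorState {
  switch (action.type) {
    case "setTemplate":
      return { ...state, template: action.template };
    case "setVariable":
      return { ...state, variables: { ...state.variables, [action.name]: action.value } };
    case "runStarted":
      return { ...state, status: "running" };
    case "runFailed":
      return { ...state, status: "error" };
    case "runFinished":
      return { ...state, status: "idle" };
    default:
      return state;
  }
}
```

In a component, `const [state, dispatch] = useReducer(editorReducer, initialState)` wires it up, and because the reducer is a plain function it can be tested, or handed to GPT-4 for critique, on its own.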

Prompting tips

I would occasionally find myself challenging GPT-4 to find another solution. Being specific about what you don't want to do is also useful.

Whenever I finished a bigger piece of work, I'd ask GPT-4 to critique it: I'd tell it what I intended the code to do and ask for any suggestions for improvement. I'd often get feedback on naming conventions, abstracting things out into separate components, or refactoring my state management to be a bit more elegant.

Cursor has chats, and I kept each one to a single topic. I avoided mixing goals within a chat: if I wanted to add something new, that was one chat; if I wanted to refactor that piece of work, I'd open a new chat rather than continue the existing one. I found I got clearer answers.

Referring to pieces of code by their line numbers (LOC) when writing prompts was much more natural than copy-pasting lots of code.

Asking GPT-4 to help you figure out how to debug things was helpful too. It'd tell me where to add console.log() or how to identify why things weren't working as expected.

When making some more fiddly changes, I found myself saying "write all of the code in this document to include this change so I can copy-paste it." Lazy, but useful!

Overall

I'm impressed with how far I managed to get. Using GPT-4 removed a mental barrier for me: I can sit down and make a bit of progress on an idea that's important to me, without disappearing down a rabbit hole of tutorials and documentation.

It feels like the space is moving so quickly. I can only presume costs will come down, and tools like v0 and Cursor will continue to improve and make me even more productive. I'm not sure what I'll build next, but it makes me feel more ambitious.

If you've ever wanted to learn to code/build something: it feels like now is an incredible time to do so. If you can describe what you want, you can code.